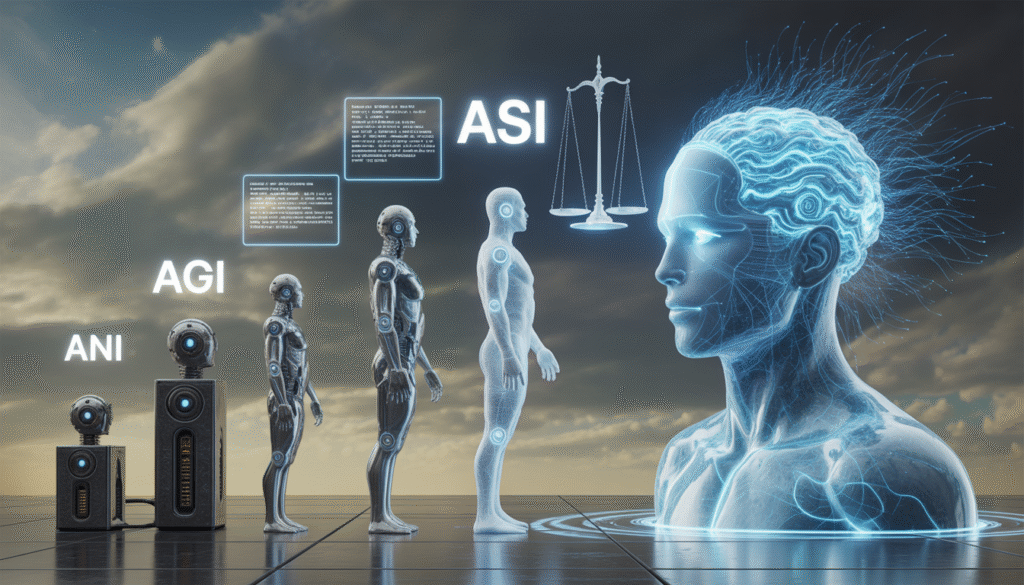

Artificial Intelligence (AI) has become an important driver of the ongoing transformation of modern culture, business, government, and daily life. It affects how people live and work together. However, there is no single description of AI. AI exists along a spectrum of abilities traditionally referred to as Artificial Narrow Intelligence (ANI), Artificial General Intelligence (AGI), and Artificial Superintelligence (ASI). These categories reflect different levels of technological achievement, as well as significant changes in our understanding of what constitutes intelligence.

Transitioning from ANI to ASI means moving from automating tasks with tools to developing autonomous entities capable of self-governance. This transition has legal ramifications. Historically, law has been a system created by and for human beings, and legal systems have been built on the basic assumption that humans are rational agents who can be held accountable for their decisions. The growing autonomy and capabilities of AI systems, including their ability to operate without human intervention, increasingly challenge the foundational assumptions on which human law has developed. This article explores the characteristics of ANI, AGI, and ASI and examines possible legal frameworks that can grow and evolve alongside AI systems.

Artificial Narrow Intelligence (ANI): The Present-Day Reality

Definition of ANI

Also known as weak AI, ANI refers to computer programs built to carry out task-specific functions within predefined confines. Its main limitation follows from this definition: ANI lacks generalizability, the ability to apply knowledge from one area or type of situation to another. Within its own task, however, it is normally effective and often outperforms humans.

Examples of ANI include natural language processing (NLP), recommendation systems, self-driving vehicles, and credit card fraud detection systems. Most ANI systems use machine learning, particularly deep learning, to identify patterns in very large datasets.

Functional Characteristics

The defining functional characteristic of ANI systems is that they perform specific tasks. They are data-dependent and have no contextual understanding of what they are doing; they do not comprehend in the human sense. The apparent intelligence of ANI relies on statistical correlations and pattern recognition, not on any real understanding.

ANI systems can also learn, or be made more sophisticated through learning mechanisms. However, an ANI system will always be confined to what its design originally defined: it can only improve within its own constraints. ANI cannot identify new objectives on its own, nor can it operate in any significant way outside its prescribed domain.

Legal Challenges of ANI

Liability and Accountability

Because ANI systems can operate independently and make decisions, assigning liability for damage or harm resulting from their use presents new and complicated challenges for current civil and criminal law. With self-driving vehicles, for instance, different forms of liability will likely need to be assigned to different parties, including the manufacturers, developers, and users of such vehicles. This complicated web of liability is likely to produce ambiguity and disputes.

Traditionally, legal frameworks for determining liability require identifying a human actor. Once a product operates autonomously, however, this traditional approach of holding a responsible actor accountable becomes difficult to apply. The existing legal framework primarily treats AI as a product subject to product liability, which may not be sufficient for products that learn and evolve.

Data Protection and Privacy

Any type of ANI or AI system raises serious concerns about personal privacy and data security. Developing ANI technologies requires large amounts of personal and sensitive information. Data protection and privacy laws, such as the GDPR and India’s Digital Personal Data Protection Act, seek to govern the collection, processing, and storage of such data.

Nonetheless, significant challenges remain in protecting individuals’ privacy and data under existing laws. Data that does not appear sensitive on its own can often be used to infer sensitive personal information.

The question of whether individuals were informed about, and consented to, the collection and use of their personal data will therefore continue to arise. It is particularly acute when data crosses from one jurisdiction to another and the two jurisdictions’ data protection laws are fundamentally different.

Bias and Discrimination in Algorithms

Algorithmic bias has emerged as a major legal and ethical issue in the context of AI systems. A central source of bias in AI systems is the historical data on which they are trained, which can cause them to perpetuate and magnify existing inequalities in society. Bias has surfaced in areas such as employment and hiring decisions, credit and lending decisions, and criminal justice.

From a legal perspective, such bias raises several questions about compliance with anti-discrimination laws. However, it is often very difficult to demonstrate bias in an AI system because such systems are highly opaque. In light of this, many have called for greater transparency and explainability of AI systems, and for better auditing capabilities.
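One simple form such an audit could take is comparing outcome rates across groups. The sketch below is illustrative only and is not drawn from any statute discussed in this article: it assumes decisions are available as hypothetical (group, selected) pairs, and it borrows the "four-fifths" threshold of 0.8 used as a rule of thumb in US employment guidance. The function names and the sample data are invented for the example.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return {group: share of favourable outcomes} from (group, selected) pairs."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's rate by the highest group rate."""
    best = max(rates.values())
    if best == 0:
        # Nobody was selected in any group, so the rates do not differ.
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """List groups whose ratio falls below the threshold (assumed: 0.8)."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical audit data: group A is selected 6 times out of 10, group B 3 out of 10.
decisions = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

rates = selection_rates(decisions)
ratios = impact_ratios(rates)
flagged = flag_groups(ratios)
print(rates, ratios, flagged)
```

A low ratio is only a signal that closer review is warranted. It does not by itself establish unlawful discrimination, and it says nothing about why the system produced the disparity, which is where explainability comes in.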

Intellectual Property Issues

The emergence of AI-generated content has disrupted the attribution of authorship and ownership. The prevailing rule in most legal systems is that only human beings can hold intellectual property rights as the author or creator of a work. However, as AI systems produce increasingly sophisticated works, this prevailing view is being challenged.

Moreover, it is uncertain whether the creator of an AI-generated output is the developer of the AI system, its user, or the AI system itself. Current legal systems typically attribute ownership of an AI-generated work to the human being who developed or used the AI system, but this position may not remain tenable over time.

Artificial General Intelligence (AGI): The Transitional Frontier

Conceptualizing AGI

Artificial General Intelligence (AGI) refers to systems that could perform all the cognitive functions that humans are capable of. Such a system would represent a dramatic progression from Artificial Narrow Intelligence. AGI would be able to perform any intellectual task that an individual can, such as reasoning, learning, problem solving, and adapting, across any domain of skill. Unlike ANI, which is limited to a specific type of task, AGI is expected to apply and adapt its intelligence in any capacity, and in that sense to possess a form of generalized intelligence. Currently, AGI is theoretical. However, the consistent evolution of the AI field and the results of AI experiments suggest that AGI may be achievable.

Functional and Philosophical Dimensions

The advent of AGI poses substantial challenges to our understanding of intelligence and consciousness, particularly regarding the potential for machines to emulate human-level cognition. AGI would mean that machines can exhibit functional capacities comparable to those associated with human-level reasoning. This blurs the distinction between human and artificial cognition and creates significant philosophical ambiguity. The same ambiguity extends to legal definitions of personhood, objecthood, and agency, and to how those definitions are applied. By creating uncertainty about these categories, AGI substantially destabilizes current legal thinking on them.

Legal Implications of AGI

AGI and Legal Personhood

One of the most significant questions about AGI is whether it should be granted legal personhood. Legal personhood gives an entity certain rights and legal responsibilities, such as the ability to own property and enter into contracts, and allows it to participate in legal processes such as lawsuits or arbitration.

Granting AGI legal personhood would be a monumental shift in current legal theory. It would require that AI systems be recognized as entities that can possess rights and have obligations. It would also raise ethical questions about placing machines on the same level as humans.

AGI Criminal Liability and Intent

A core element of criminal liability is mens rea, or “guilty mind.” Since AGI may be capable of autonomous decision-making, determining intent to commit a crime becomes very complicated. Can a machine form intent? If so, how do we assess that intent?

Even if intent could be established, traditional methods of punishing people would likely not work on AGI, so new legal categories and definitions of wrongdoing by non-human agents would be needed.

Impact on Employment and Economic Structures

AGI has the power to alter the labour market profoundly. Unlike ANI, which automates specific tasks, AGI could replace entire professions. This raises significant legal and economic challenges.

Governments would need to develop policies in response, potentially including a universal basic income, retraining programs for new jobs, and alternative labour regulations. The nature of employment itself could come into question.

Contractual Capability

If an AGI system can understand and negotiate the terms of an agreement, it may have the legal capacity to enter into a contract. This raises concerns about consent, enforceability, and liability, all of which would need to be examined from a legal perspective. Every legal system will need to assess whether AGI acts as an agent for a human party to an agreement or as an independent entity. The answer will affect how it is determined that all parties understood and agreed to the terms of the agreement.

Artificial Superintelligence (ASI): The Hypothetical Apex

What is ASI?

ASI stands for Artificial Superintelligence and refers to systems that would exceed human intelligence across all domains, including cognitive, creative, emotional, and strategic reasoning. ASI is often associated with the concept of the technological singularity.

While ASI is still largely theoretical, it is worth taking seriously due to its potential impact.

Characteristics and Risks

An ASI system would have the ability to recursively improve itself, resulting in a rapid and potentially uncontrollable rate of increase in intelligence. This creates issues related to alignment, control and existential risk.

An ASI system operating beyond normal human comprehension poses a unique challenge for the governance and regulation of such a system.

Legal Implications of ASI

Existential Risk and Precautionary Regulation

The emergence of ASI raises questions about the risk of human extinction or other catastrophic outcomes resulting from its use. Legal frameworks need to take a precautionary approach that places strict limits on the development and deployment of ASI, which may include a licensing regime, safety testing, and international oversight.

Global Governance and International Law

As ASI will have global implications, it cannot be addressed solely through national regulations. Instead, countries will need to cooperate to develop international treaties and agreements like those that exist on nuclear weapons and climate change. Geopolitics may slow or undermine efforts to formulate and implement such agreements, because individual nation-states are competing with one another for technological dominance.

Concentration of Power

The development of ASI is likely to occur in only a few globally influential settings, such as a handful of countries or large multinational corporations. This creates a risk of monopolistic control and of these relatively few organizations abusing their power. Legal frameworks must address competition, access, and accountability to keep markets from becoming too heavily consolidated and to ensure that ASI is developed for the benefit of all humans.

Redefining Human Rights

ASI challenges the very foundation of human rights. If machines surpass human intelligence, the basis for privileging human interests may be questioned. This could lead to the emergence of new legal paradigms that extend rights beyond humans.

Comparative Perspective: From Tool to Autonomous Entity

The progression from ANI to ASI is a shift from tools to autonomous agents. ANI systems operate as tools under human control, AGI holds the potential for autonomous reasoning, and ASI represents entities that are likely to exceed human capability completely. This changing landscape requires an equivalent evolution of existing legal frameworks. Traditional law is usually written with human actors in mind, so using it to regulate autonomous technologies may prove ineffective.

Toward Adaptive Legal Frameworks

To address the challenges associated with the emergence of AI, new legislation will need to be proactive and adaptive. This will require specialized regulations for AI, collaboration with other fields, and continual monitoring of technological advancements.

Furthermore, legislation must address three important areas: transparency, accountability, and ethical alignment. It will have to balance the dual goals of supporting innovation and managing risk, so that the development of AI technology benefits society and any damage is minimized.

Law in the Era of Intelligent Machines

AI is developing from Artificial Narrow Intelligence toward Artificial General Intelligence and, potentially, Artificial Superintelligence. Each of these stages represents one of the most significant transformations in human history, and each raises new issues and challenges for the law.

The limitations of existing legal structures, such as those pertaining to liability, privacy, and intellectual property, have already been exposed by today's narrow AI systems. AGI would unsettle our concepts of personhood, responsibility, and the structure of our economy, and ASI might ultimately challenge our very relationship with all types of intelligent systems, an issue that raises not only legal questions but also philosophical and ethical ones.

Thus, the law must evolve to meet these challenges. Doing so will require not only a technical understanding of advances in intelligent systems but also an understanding of human values and societal goals. Uncertainty about how AI will develop is matched by uncertainty about how the law will respond. The challenge now is to prepare legal systems to meet the challenges these technologies present to humanity.